Model decomposition is the process of dividing the model into logical or functional units. In today’s video, I walk through the basic, high level model decomposition.

White-boarding from home

Before this blog existed I wrote a LinkedIn blog post “What’s on your whiteboard”. Whiteboards, as a rapid, iterative development tool, are a favorite of co-workers around the world, so what can we do now, while working remotely, to replicate the “whiteboard experience”?

Gather round the whiteboard…

Before we figure out how to replicate a whiteboard remotely (1) we need to talk about why we whiteboard.

- Architecture & Stereotypes: (2) Outlining at a conceptual level how the product works.

- Free-body diagrams: (3) Showing the interactions between forces

- Note-taking: Sometimes the white board serves as a running checklist of what has been discussed and what has been agreed to.

- Timelines: Writing out timelines for the project development.

Your virtual whiteboard

In many posts I have written “with Model-Based Design, a single model is evolved throughout the design process”. Let’s take a step back and think now of the system. Can we start using and evolving a system throughout the design process?

Model-Based Design has an answer to the Architecture & Stereotype use case: the collaborative (4) use of tools like System Composer or UML diagrams. What is more, once this collaborative use is established, the whiteboard isn’t “erased” at the end of the session; it becomes part of the product.

One thing of note

For mature organizations with an in-place requirements and bug tracking process, “notes” can directly be added to the requirements or bug tracking infrastructure. While not as “easy” as the early white boarding example, capturing these actions during the meeting reduces the possibility of “transcription errors”.

Footnotes

- I do know that many video conference tools offer “whiteboards” but with few exceptions I have not found those to be good environments for rapid prototyping.

- Half the time when I think “stereotypes” I think of Cambridge Audio, Sony and Panasonic.

- Other fields have their equivalent to FBDs, for now I am using that as a generic term.

- The key word, though, is collaborative; if it is just one person “sketching” the diagram without feedback then, while still a powerful tool, it is not a “whiteboard” experience.

Critical conditionals: initialization, termination and other conditional functions

Like many people, I have found that the COVID lockdown has given me time to practice skills; I have been spending time practicing my (written) German, so if you skip to the end you can see this post “auf Deutsch”.

Back to the start

Stoplights provide us with information: Green = Go (Initialize), Red = Stop (Terminate) and Yellow, according to the movie Starman, means go very fast. A long-standing question within the Simulink environment has been, “what is the best way to perform initialization and termination operations?”

Old School: Direct Calls in C

Within the Simulink palette, the “Custom Code” blocks allow you to directly insert code for the Init and Termination functions. The code will show up exactly as typed in the block. The downside of this method is that the code does not run in simulation. (Note: This can also be done using direct calls to external C code. In these cases, getting the function to call exactly when you want can be difficult.)

State School: Use of a Stateflow Diagram

A Stateflow chart can be used to define modes of operation; in this case, the mode of operation is switched either using a flag or an event. This approach allows you to call any code (through external function calls or direct functions) and allows for reset and other event driven modes of operation. The downside to this method is that you need to ensure that the Stateflow chart is the first block called within your model (this can be done by having a function caller explicitly call it first).

New School: A very economical way

The “Initialization,” “Termination” (and “Reset“) subsystems are the final recommended methods for performing these functions. The code for the Initialization and Termination variants will show up in the Init and Term section of the generated code. Reset functions will show up in unique functions based off of the reset event name.

Within this subsystem you can make direct calls to C code, invoke Simulink or MATLAB functions and directly overwrite the state values for multiple blocks.
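To make the ordering concrete, here is a minimal sketch in Python of a component with separate initialize, step, reset and terminate entry points. This is only an analogy for the lifecycle described above, not what Simulink generates; the class name `Controller`, the `integrator` state and the logged strings are all hypothetical.

```python
class Controller:
    """Hypothetical component with explicit lifecycle entry points."""

    def __init__(self):
        self.integrator = 0.0  # a block "state" value
        self.log = []          # records which lifecycle hooks ran

    def initialize(self):
        # Runs once at startup: overwrite a state value directly.
        self.integrator = 1.0
        self.log.append("init")

    def step(self, u):
        # The "body" of the model, called every sample.
        self.integrator += u
        return self.integrator

    def reset(self):
        # Triggered by a named reset event; lives in its own function.
        self.integrator = 0.0
        self.log.append("reset")

    def terminate(self):
        # Runs once at shutdown, e.g. to save data.
        self.log.append(f"term: final={self.integrator}")


controller = Controller()
controller.initialize()
after_first_step = controller.step(2.0)
controller.reset()
after_reset_step = controller.step(1.0)
controller.terminate()
```

The point of the sketch is that the state overwrite happens in `initialize` and `reset`, never inside `step`, which mirrors keeping this logic out of the body of the model.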

Best practices for Init and Term

MATLAB and Simulink have default initialization and termination functions for the model and the generated code. The defaults should only be overridden when the default behavior is incorrect for your model. There are 4 common reasons why custom Init / Term functions are required; if you don’t fit into one of these, reconsider whether you should be using them.

- Startup / Shutdown physical hardware: for embedded systems with direct connections to embedded hardware, the Init / Term functions are required. (Note: it is a best practice to try to have your hardware systems in models external to the control algorithms. This allows you to “re-target” your control algorithm to different boards easily.)

- One time computations: Many systems have processor intensive computations that need to be performed prior to the execution of the “body” of the model.

- External data: as part of the startup / shut down process, data may need to be saved to memory / drive.

- You just read: a blog and you want to try things out… I’m glad you want to try it, but review the preceding 3 reasons.

Bonus content

As promised, the results of practicing my (written) German skills. Und so

Ampeln liefern uns Informationen: Grün = Los (Initialisieren), Rot = Halt (Beendigungsvorgänge) und Gelb bedeuten laut Film Starman, sehr schnell zu fahren. Eine langfristige Frage in der Simulink-Umgebung lautete: “Was ist am besten, um Initialisierungs- und Beendigungsvorgänge durchzuführen?”.

Old School: Direct Calls in C

Innerhalb der Simulink-Palette können Sie mit den Blöcken “Benutzerdefinierter Code” direkt Code für die Funktionen Init und Beendigungsvorgänge einfügen. Der Code wird genau so angezeigt, wie er im Block eingegeben wurde. Das Problem bei dieser Methode ist, dass der Code in der Simulation nicht ausgeführt wird. (Hinweis: Dies kann auch durch direkte Aufrufe von externem C-Code erfolgen. In diesen Fällen kann es schwierig sein, die Funktion genau dann aufzurufen, wenn Sie möchten.)

State School: Use of a Stateflow Diagram

Ein Stateflow Chart kann verwendet werden, um Betriebsmodi zu definieren. In diesem Fall wird der Betriebsmodus entweder mithilfe eines Flags oder eines Ereignisses umgeschaltet. Mit diesem Ansatz können Sie einen beliebigen Code aufrufen und zurücksetzen und andere ereignisgesteuerte Betriebsmodi ausführen. Die Einschränkung dieser Methode ist, dass Sie sicherstellen müssen, dass das Stateflow-Diagramm der erste Block ist, der in Ihrem Modell aufgerufen wird (dies kann erfolgen, indem ein Funktionsaufrufer es explizit zuerst aufruft).

New School: A very economical way

Die Modellblöcke “Initialisieren”, “Beendigungsvorgänge” (und “Zurücksetzen”) sind die endgültige Methode zur Ausführung dieser Funktionen. Der Code für die Initialisierungs- und Beendigungsvorgänge Optionen wird im Abschnitt “Init” und “Term” des generierten Codes angezeigt.

Rücksetzfunktionen werden in eindeutigen Funktionen angezeigt, die auf dem Namen des Rücksetzereignisses basieren.

Innerhalb dieses Subsystems können Sie C-Code direkt aufrufen, Simulink- oder MATLAB-Funktionen aufrufen und die Zustandswerte für mehrere Blöcke direkt ersetzen.

Best practices for Init and Term

MATLAB und Simulink verfügen über standardmäßige Initialisierungs- und Beendigungsfunktionen für das Modell und den generierten Code. Es gibt vier häufige Gründe, warum benutzerdefinierte Init / Term-Funktionen erforderlich sind.

- Physische Hardware starten / herunterfahren: Für eingebettete Systeme mit direkten Verbindungen zu eingebetteter Hardware sind die Init / Term-Funktionen erforderlich. (Hinweis: Die beste Vorgehensweise ist, Ihre Hardwaresysteme außerhalb der Steuerungssysteme zu haben. Auf diese Weise können Sie Ihre Software schnell auf neue Hardware portieren.)

- Einmalige Berechnungen: Berechnungen, die vor dem Start des Steueralgorithmus erforderlich sind.

- Externe Daten: Daten, die in externe Quellen geschrieben werden.

What is next for Model-Based Design?

I have drawn the software design “V” roughly 1e04 times.(1) Over time, the scope of what is in the V for Model-Based Design has increased. Sometimes I think there should be a Model-Based Design equivalent to Moore’s Law (4); perhaps the “delta V”.(5)

In the arms of the V

Often when asked “what’s next?”, deeper is the answer. Improve a product, improve the process, increase (or decrease) the outputs; the less obvious answer is what can be brought into the embrace of the V.

As the scope of Model-Based Design has expanded, it has done so in a technology-first, workflow-second hodgepodge.(6) What is unique now is the embrace of model-based systems engineering, which focuses on workflow first and tools second.

What is a system?

Definitions of systems are generally unsatisfying as there is no consensus on what comprises a system; however, the definition I like best is…

Open and Closed Systems: A system is commonly defined as a group of interacting units or elements that have a common purpose. … The boundaries of open systems, because they interact with other systems or environments, are more flexible than those of closed systems, which are rigid, and largely impenetrable

Come together, right now, over???

I doubt the Beatles were thinking of software systems when they wrote this song (7) but it gets to the heart of why systems are important. They give people a place to “come together.” No model is, or should be, an island.(8) But how do we come together? The answer is through abstraction.

How does the centipede walk? (9)

The objective of a systems integration environment is to provide an abstracted language so that software, hardware, controls, physical modelers… can all exchange “information” without having to understand the heart of each other’s domain.

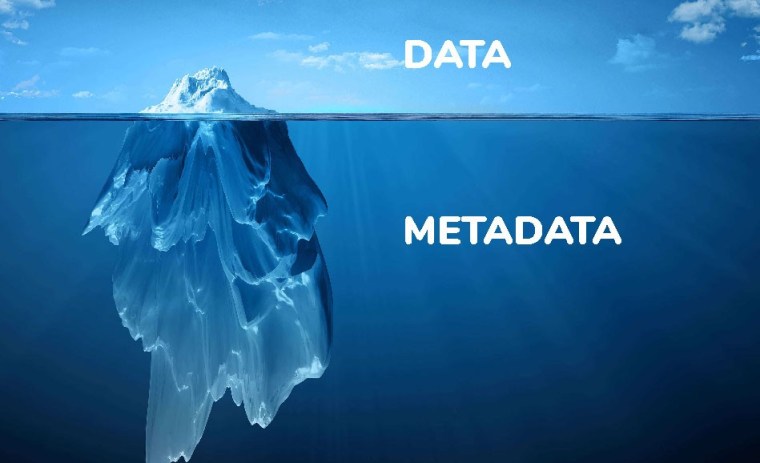

At a minimum, a system acts as both a “living” ICD and as an ICD verification tool. When working in the metadata-rich Model-Based Design environment a system integration tool can do much more.

Used well, metadata is an asset; used poorly, it can sink your ship.(10) Use of a systems integration tool provides a natural “organizational” layer on top of the metadata. System interactions, data dependencies and system-wide requirement coverage can all be accessed from within a system level model.

The Worm Ouroboros: Model-Based Design or Model Based System Engineering

I’ve been asked “What is the boundary between MBD and MBSE”? Right now where it lies is an open question (11) and not at present a fruitful question. Five years ago there was a clear answer, and five years from now we will again have clarity, but right now we are in a land rush (12) where developers can explore new ideas and stake out new domains. What is next? A better understanding of what is.

Footnotes

- I came to this number using a Fermi Estimation methodology.

Years in MBD Community ~ 20, Customer per year ~ 50, Drawings per customer ~ 10

Years mentoring in MBD ~ 10, mentored per year 4, drawings per mentored ~ 20 (2)

tot = 20 * 50 * 10 + 10 * 4 * 20 = 10,800. (3)

- In the end, the mentored end up drawing it and having their own way of talking about the design V.

- The Fermi estimate may make you think that the 800 from mentoring is not important; however, if I count the number of times the people I taught in turn draw this, then I may hit another order of magnitude.

- It always seemed that Moore’s law should be called “Moore’s extrapolation.”

- You could think of each software release as an instantaneous acceleration.

- Technology first, workflow second: a tool for solving a specific problem is developed; how that tool fits into the overall workflow comes later (along with refinements to the tool).

- And I doubt it was an endorsement of open source software.

- John Donne poem “No man is an Island” : (with apologies)

No man is an island entire of itself; every man

is a piece of the continent, a part of the main;

No block is a model entire of itself; every block

is a piece of a subsystem, a call from the main();

- The Centipede’s Dilemma is something organizations hit when they start to examine how they do something. Much like the Coyote who can run on air until they look down.

- Though I suppose in the case of the Titanic they had the data to go by; it was a structural problem.

- The image of an “open system” was chosen to lay the ground work for this section.

- For those of you less familiar with United States history, a “land rush” refers to a time period in American history when settlers could “ride and claim” a parcel of land. When I was a child this was taught as a positive moment in history; as an adult, it is easy to see the casualties and costs to Native Americans.

Video blog: Avoid the pitfalls of test vector generation

This post is a companion blog to the earlier post on reuse. Here I will demonstrate how to generate scenario-based test vectors. Enjoy!

I think I’ve written this before: Revisiting Reuse

I’ve seen the question many times “Why do you care so much about reuse?” So giving my reusable answer I say “when you do something from scratch you have a new chance to make the same mistakes.” (1) If you look at your daily work, you will see we already reuse more than we realize.

When I go looking for images for “reuse” what I find most of the time are clever projects where you take a used plastic bottle and make it into a planter, or an egg carton becomes a place to start seeds.(2) What I want to talk about today is reuse for the same purpose, e.g. reuse like a hammer, a tool that you use to pound and shape the environment over and over.(3)

Hammer time(4)

Why do I care about reuse? Reuse is a company’s greatest asset; it is the accumulated knowledge over time. No one talks about “reusing a wheel” but that is what we are doing, we are reusing a highly successful concept.

So how do we get into the wheel house?(5) The first step is to identify a need, something that you (or ideally many people) need to use / do regularly.

When writing tests I frequently need to get my model to a given “state” before the test can begin. Creating the test vectors to do that manually is time consuming and error prone.

Once you have done that, think if there is a way that the task can be automated.

The solution I found was to leverage an existing tool, Simulink Design Verifier, and use the “objective” blocks to define my starting state. The tool then finds my initial test vectors to get me to where I want to be.
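To illustrate the idea of “tell the tool the state I want, and let it find the inputs,” here is a minimal sketch in Python. It is not how Simulink Design Verifier works internally; I have swapped in a plain breadth-first search over a toy state machine. The states, the input names and the function `find_test_vector` are all hypothetical.

```python
from collections import deque

# Hypothetical toy model: (current state, input) -> next state
TRANSITIONS = {
    ("park", "brake+shift"): "drive",
    ("drive", "accelerate"): "cruising",
    ("drive", "brake+shift"): "park",
    ("cruising", "brake"): "drive",
}


def find_test_vector(start, goal):
    """Breadth-first search for the shortest input sequence from start to goal.

    Returns a list of inputs, or None when the goal cannot be reached.
    """
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, inputs = queue.popleft()
        if state == goal:
            return inputs
        for (src, inp), dst in TRANSITIONS.items():
            if src == state and dst not in seen:
                seen.add(dst)
                queue.append((dst, inputs + [inp]))
    return None  # no sequence of inputs reaches the goal state


vector_to_cruising = find_test_vector("park", "cruising")
vector_to_unreachable = find_test_vector("park", "airborne")
```

Note the `None` branch: even in a toy like this there are target states for which no test vector exists, so any reusable version needs to report that cleanly.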

As described right now, this is a “proto-wheel.” It is a design process that I use (and have taught customers to use) but it is not fully reusable (yet). Why is that?(6)

Horseshoes and hand grenades(7)

This fails the “wheel test” in 2 fundamental ways

- It isn’t universal: every time I use it I need to recreate the interface, e.g. manually define the goal state

- It may not work: there are some conditions for which this approach will not find a solution.

Becoming a wheelwright: doing it right

If you want this to become “wheel like” you need to address the ways in which it fails. Here is how I plan to do that.

Create a specification language: by creating a specification language I, and my customers, will be able to quickly define the target state. Further, the specification language will ensure that errors in specification do not enter into the design

Analyze the design space: when a tool doesn’t work there is a reason; in some cases it can be deduced through mathematical analysis, in others, through analysis of failure cases. I am currently “tuning the spokes” on this wheel.

But will it roll? What is my (your) role (8) in making it happen?

In the end, a good idea and strong execution are not enough. The key to widespread reuse is getting it used by people outside the original (in this case testing) community. Until you do that it is only a specialty hammer.(9)

Getting those outside people to adopt a new tool or method is about getting people to care about the problem and the solution.(11)

Picking up a “hammer” is a 4 step process

- Know you have a problem: sometimes when you have been doing something one way for a long time you don’t even realize there is an issue.

- Know there is a solution: if you don’t know hammers exist you will keep hitting things with rocks. It gets things done but your hand hurts.

- Have time to try out the solution: even the best hammer can be slower than your trusty rock the first few times you use it.

- Give the hammer maker time to make you a better hammer: chances are even the best tool will need refinement after the first few users.

Final comments: Why now?

Reuse reduces the introduction of errors into the system. Remember, “when you do something from scratch you have a new chance to make the same mistakes.”(12) And when working remotely during Covid, the chance to do so increases. Start looking at the tasks you do regularly and ask the need and automation questions.

Footnotes

- My wife Deborah always raves about my strawberry rhubarb crisps. But even after making over 100 of them over our 25 years together, I still can get the sugar to corn starch to lemon ratios a bit wrong if I don’t watch what I am doing.

- From a total energy usage I do question if we would be better off just recycling the bottle and egg carton.

- I started to think of the song “If I had a Hammer.” As it is a Pete Seeger song, it isn’t surprising that it is about civil and social rights. As a kid, the line “I’d hammer out love” seemed odd to me; hammers were blunt tools. When I got older I saw the other uses of hammers, to bend and shape things, to knock things into place. When you write software, write it like it’s a tool that can do all the things you need it to do.

- As a child of the 80’s “U Can’t Touch This” (hammer time) was at one time on nearly constant replay on the radio.

- First, make sure you are developing in an area that you know well so you know what needs to be done over and over.

- When I started this blog, I referenced the serial novels of the 19th Century. There are times I am tempted to end a blog post on a cliff hanger, but not today.

- When I first wrote the section title I thought “this must be a modern phrase” as, while horseshoes have a long history, hand grenades are relatively new (e.g. 200 years old?). I was wrong. The earliest version can be traced back to Greek fire (or earlier).

- Homonyms, as a lover of puns, have always been something I have loved.

- If you thought I was done with the hammer metaphor you were wrong; I’m bringing it back at the end to drive my point home(10)

- Because that is what you do with hammers

- A blog post about this methodology would be one way of getting people to know about it.

- And in the spirit of reuse, I reused this from the start

Baskin Robbins: 31 flavors of models

Perhaps it is a coincidence, but, when I looked up the definition of “variant” online the example sentence was about an illness (1). The concept behind variants is appealing; within one model hierarchy, include multiple configurations of your target. But from a testing perspective, well….

If you go to Baskin Robbins, home of 31 flavors, and order a 2 scoop cone you would have 31!/(31-2)! = 930 patterns, meaning it would take you almost 18 years to try them all if you did this once per week(2). So if you have an integration model with 8 referenced models, each model having 3 variants; well, just how many weeks do you want to test for?
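The arithmetic here is easy to check with a few lines of Python. This is a sketch under two assumptions of mine: the variants in each referenced model can be chosen independently of one another, and you test one full configuration per week. The function names are hypothetical.

```python
import math

WEEKS_PER_YEAR = 52


def ordered_cones(flavors, scoops):
    """Ordered picks without repetition: flavors! / (flavors - scoops)!"""
    return math.perm(flavors, scoops)


def variant_combinations(models, variants_per_model):
    """Every referenced model independently selects one of its variants."""
    return variants_per_model ** models


cones = ordered_cones(31, 2)                   # 31 * 30
years_for_cones = cones / WEEKS_PER_YEAR       # one cone per week

configs = variant_combinations(8, 3)           # 3 ** 8
years_for_configs = configs / WEEKS_PER_YEAR   # one configuration per week
```

With those assumptions the 8 model, 3 variant case gives 6,561 configurations, or a bit over 126 years at one per week, which is why constraining which variants may appear together matters so much.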

Related and unrelated variants

To simplify the design and testing process the variants in a model should be related, e.g. if you have a variant for your car, manual or automatic transmission, then a related variant could be for the wave plate (auto) versus clutch (manual) models. An unrelated variant could be for the HVAC system.

Please note, the ice-cream references were supposed to end in the last paragraph, but the “which of these don’t belong” image I found had an ice-cream cone. That was not my intention but it is too late now.

Defining your inclusion matrix

What this matrix shows us (3) is which model variants

- Are allowed with other variants

- Are required by other variants

- Are not impacted by other variants

Validate the matrix

There are two primary methods for validating the inclusion matrix: inlined and pre-computed. With the inlined version, the full set of conditions for a variant to be selected is coded into the variant selection process. With the pre-computed version, the variant logic exists external to the component and only the final value is evaluated in the component.

As you would expect, there are pros and cons to both approaches (4). Having the logic in the component makes it easier for the developer of the module to understand what is going on; however, it makes it more difficult for a system developer to have a global view of the variants. On balance the external computation is more likely to provide a robust process.
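As a rough illustration of the pre-computed approach, the inclusion rules can live in one table outside the components and be checked before any variant is activated. This is a minimal sketch in Python; the variant names, the `REQUIRES` and `EXCLUDES` tables and `validate_selection` are all hypothetical, loosely based on the transmission example above.

```python
from typing import List, Set

# Hypothetical inclusion rules, kept external to the components.
REQUIRES = {
    "automatic": {"wave_plate"},
    "manual": {"clutch"},
}
EXCLUDES = {
    "automatic": {"manual", "clutch"},
    "manual": {"automatic", "wave_plate"},
}
# Variants that appear in neither table (e.g. an HVAC variant) are unrelated
# and therefore not impacted by the transmission choice.


def validate_selection(selected: Set[str]) -> List[str]:
    """Return a list of rule violations for a proposed set of active variants."""
    errors = []
    for variant in sorted(selected):
        for needed in sorted(REQUIRES.get(variant, set()) - selected):
            errors.append(f"{variant} requires {needed}")
        for banned in sorted(EXCLUDES.get(variant, set()) & selected):
            errors.append(f"{variant} is not allowed with {banned}")
    return errors


good = validate_selection({"automatic", "wave_plate", "hvac_basic"})
bad = validate_selection({"automatic", "clutch"})
```

Because the rules sit in one place, a system developer can review the whole matrix at once, and each component only ever sees the already validated selection.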

Variants versus reuse

In our first example, an automatic versus manual transmission, this was clearly a variant; the models would be significantly different. But what if we were talking about a 4 speed versus a 5 speed transmission? Could that model, with some refactoring and change of data, be reused?

The key thing to keep in mind is that while a re-used model will require additional test cases, many of the “basic” tests would already exist and could be modified for the reused data. On the other hand, a model variant will require a whole (or nearly whole) new set of tests.

Why now?

Why is this blog post part of the “impact of COVID” collection, when this is good advice at any time? The answer is simple: as we social distance, the informal communication that makes poor design (5) tolerable is less prevalent.

This example COVID drives

Adopt this and thrive. (6)

Footnote

- The jest here is that the overuse of variants can lead to multiple bugs in your software due to the increased complexity.

- In reality this would take far less time as there are some combinations that should never be considered; for instance, anything with coconut.

- The Matrix (the movie) also shows us that Hollywood had (and has) an ill informed conception of what computer programming / hacking really looks like.

- All design is about the pros and cons, there are few things that are 100% “good”

- Informal communication makes that poor design tolerable, but it doesn’t mean that anyone is happy.

- I’m not sure if this is an example of “doggerel” or “bearerel” poetry. Hopefully you can bear with me.

Testing your testing infrastructure

Ah tests! Those silent protectors of development’s integrity, always watching over us on the great continuous integration (CI) system in the clouds. Praise be to them and the eternal vigilance they provide; except… What happens to your test case if your test infrastructure is incorrect?

Quis custodiet ipsos custodes(1)

There are 4 ways in which testing infrastructure can fail, from best to worst

- Crashing: This is the best way your test infrastructure can fail. If this happens the test ends and you know it didn’t work.

- False failure: In this case, the developer will be sent a message saying “fix X”. The developer will look into it and say “your infrastructure is broken.”(2)

- Hanging: In this case the test never completes; eventually this will be flagged and you will get to the root of the problem

- False pass: This is the bane of testing. The test passes so it is never checked out.

False passes

Prevention of false passes should be a primary objective in the creation of testing infrastructure; the question is “how do you do that?”

Design reviews are a critical part of preventing false passes. Remember, your testing infrastructure is one of the most heavily reused components you will ever create.

While it does not prevent false passes, adherence to standards and guidelines in the creation of test infrastructure will reduce common known problems and make it easier to review the object.

There are 3 primary types of “self test”

- Golden data: the most common type of self test is to pass known data that either passes or fails the test. This shows if it is behaving as expected but can miss edge cases(3)

- Coverage testing: Use another tool to generate coverage tests. If this is done, then for each test vector provided by the tool provide the correct “pass or fail” result.

- Stress and concurrency testing: For software running in the cloud, verify that running in the cloud does not itself cause errors(4)

- Time: Please, don’t let this be the way you catch things… Eventually, because other things fail, false passes are found through root cause analysis.
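The golden data idea can be sketched in a few lines of Python: feed the checking function inputs whose verdicts you already know, and report any case where it disagrees. Everything here is hypothetical (the `within_tolerance` check, the tolerance and the golden cases); it is a sketch of the technique, not a complete self test.

```python
def within_tolerance(actual, expected, tol=1e-3):
    """Hypothetical piece of test infrastructure that we want to self test."""
    return abs(actual - expected) <= tol


# Golden data: (actual, expected, should_pass), verdicts known in advance.
GOLDEN_CASES = [
    (1.0000, 1.0, True),
    (1.0005, 1.0, True),
    (1.0100, 1.0, False),
    (float("nan"), 1.0, False),  # an edge case that is easy to forget
]


def self_test(check, cases):
    """Run the check against golden data and list every wrong verdict."""
    mismatches = []
    for actual, expected, should_pass in cases:
        verdict = check(actual, expected)
        if verdict != should_pass:
            kind = "false pass" if verdict else "false failure"
            mismatches.append((actual, expected, kind))
    return mismatches


healthy = self_test(within_tolerance, GOLDEN_CASES)
# A deliberately broken checker that always passes, to show what gets caught.
broken = self_test(lambda actual, expected: True, GOLDEN_CASES)
```

The deliberately broken checker is the important half: a self test that has never been shown to catch a false pass is itself a candidate for a false pass.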

Final thoughts

In the same way that nobody(5) notices water works until they fail, it is common to ignore testing infrastructure. Having a dedicated team in support is critical to having a smooth development process.

Footnotes

- In this I think we all need to take a note from Sir Samuel Vimes and watch ourselves.

- There is an issue here; frequently developers will blame the infrastructure before checking out what they did. Over time the infrastructure developers “tune out” the development engineers.

- Sometimes the “edge cases” that golden data tests miss are mainstream but since they were not reported in the test specification document, they are overlooked by the infrastructure developers.

- The types of errors seen here are normally multiple data reads / writes to the same variable or licensing issues with tools in use.

- And if you look at it with only one eye, failures will slip past.

The next generation of Model-Based Design

I predict that in just one year the state of Model-Based Design could (1) see a jump equivalent to 7 years of normal progress; COVID-19 has brought to the forefront a needed set of transformations that will reshape the processes and infrastructure that define Model-Based Design. It gives us an opportunity to realize a new vision both for these times and beyond.

In a painting, every brush stroke(2) matters; but it is only in the collection of the strokes that the full image is revealed. Fortunately, in the software development process, you do not need all of the “strokes” to see the full picture; each improvement stroke provides a return on investment. By intelligently clustering the strokes together you see a multiplicative effect.

The objective of this new series of blogs is to provide the strokes and to define the clusters so the order of adoption can be optimized.

The obvious change due to COVID-19 is working remotely; this change exposes multiple areas where Model-Based Design should be improved. Cultural needs feed into process changes, which then mandate enhanced automation; these are the “strokes” that we will look at.

The clusters

I want to introduce a few of the clusters I have already identified as “ready” for transformation. As this blog continues, this list will be expanded and refined. For now…

The review process

Current review processes depend on two things: informal communication before the actual review and the highly interactive nature of in-person reviews; both of these suffer in the remote working environment. To create a better review process there are several “strokes” that are needed: changes in architectural style to make review easier, up-front communication through the use of ICDs, and automation to validate prior to the meeting.

Creation and validation of physical models

At first glance, the creation of physical models should not be impacted by COVID-19. If we are building our models from first principles then those principles are the same if we are in the same room or not (3). However, in practice, first principle models are not practical and simplifying assumptions need to be made, which in turn means that the model needs to be validated against real world data(4). How do you collect that data when you need to social distance? How do you validate it?

Requirements life cycle

The requirements life cycle will perhaps see the most important changes. Requirements act as the primary source of truth in the development process; as a result, having a robust, understandable requirements life cycle is critical. We will need to see improvements in the way requirements are written, tested and maintained.

The testing life cycle

Testing should be like breathing, something you do automatically to keep you alive (5). The testing life cycle is impacted by COVID in a number of ways. First, there is the stress on testing infrastructure (tests need to be moved to continuous integration (CI) systems). Next, there is an impact on the development of tests: when the developer and the test engineer don’t sit next to each other,(6) there is less of the informal communication that provides bullet resistant (7) tests.

The release process

The smallest mistake early in your development process can have a butterfly effect (8) on the downstream process. The use of automation at all stages of the release process will need to change to prevent the small flaps early on from leading to large problems downstream. If we follow the automation upstream we will see that there need to be cultural changes that support people in the use of automation.

The strokes

The strokes are updates to classical Model-Based Design topics; areas where the existing shortfalls are exposed by the current working conditions.

- Cultural

- Improving formal documentation

- Enhancing and simplifying informal communication

- Meeting your meeting responsibilities

- Welcome aboard, on-boarding at a distance

- …

- Process & “Style”

- Workflows

- One of these things is not like the others: Version controls

- What I’m expecting: writing requirements

- Follow the leader, improving the traceability process

- Get your MBD license: Certification time!

- …

- Architectural changes

- Mega fauna models : “Right sizing” your models

- Come together, right now: model integration

- They have a word for that in… Selecting the correct modeling language

- Multi-generation code development: integrating legacy code

- Put on your model reorg boots!

- Baskin Robbins 31 flavors of models

- …

- Development changes

- Workout routine for physical models

- How do you know what you know? Validation methodologies

- Polymorphic functionality

- I think I’ve written this before! Revisiting reuse

- Testing changes

- A shock to your testing cycle

- Send in the robots: test automation

- The ABCs of testing interfaces

- No bubbles: standardized testing

- …

- Workflows

- Automation

- Look at this cool thing I wrote: when and how to automate

- Compound interest: return on investment for automation

- The ice cream problem: bullet proof automation

- …

I will be posting blogs on these topics about once per week.

Footnotes

- I write “could” because all changes are dependent on taking action; now is the time to start.

- I have often wondered to what degree the Pointillism school of art influenced early computer graphics which were sprite based; I also have wondered if the term “sprite” is in part, due to the number of early fantasy computer games that included sprites.

- I tried for a long time to think of a “Spooky action at a distance” joke that would fit in here but wasn’t able to. Perhaps you could say after working as part of a team for long enough you know how everyone thinks, so you are “developing at a distance.”

- Even when you don’t need to simplify the model, real world validation is often recommended for complex systems.

- We could push this analogy pretty far; under stress you breathe/test more heavily. If you train your systems you can run much harder before you are out of breath.

- In the best cases, organizations have separate development and testing roles. When they are combined into one, the developer is the tester; you are sitting next to yourself and sitting alone, which can lead to developer bias in the creation of tests.

- I write “bullet resistant” not “bullet proof” in recognition that to get to “bullet proof” is part of the process of validating your tests (see this on developing testing)

- The more common use of the butterfly effect relates to chaos theory, e.g. a butterfly flaps its wings and triggers a tornado. However, when I first learned of it, it came from a Ray Bradbury story, “A Sound of Thunder”.

Don’t ISO-late

When it comes to safety standards, such as ISO 26262, the old adage “better late than never” can be both dangerous (1) and costly. The simplest, somewhat humorous, description of a safety critical standard that I have read is the following:

- Say what you are going to do

- Do what you said you would do

- Verify that what you did matches what you said

- Generate reports

While it is facetious in its simplicity, it gets to the heart of the matter. Safety critical processes are about being able to show that, at each step along the development path, you both plan out what you intend to do and verify that what you did matches your intentions (plan, do, show).

If that is all

You have to do, how hard can it be? Well, let’s talk about what it takes to “show” that you did what you said. First, you need to be able to show traceability between all artifacts. This means you need a robust tool that will show the links between

- Requirements to the model

- Requirements to tests

- Test results to the model

- Test results to the requirements

- The model to the generated code

- Integration tests to requirements

- And between all the other components

Furthermore, those links need to take into account the version control for all the units under test. Setting up the “hooks” (2) for all of these components is a task that needs to be done at the start of the project. There is a reason for the old joke about airplane development: “For every pound of plane you have 20 pounds of paper.” (3)
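As a rough illustration of what such a traceability check involves, here is a minimal Python sketch. It is not the interface of any real tracing tool; the `Artifact` class, the `REQUIRED_LINKS` table, the `missing_links` function and the artifact names and revisions are all hypothetical, with the link kinds simply taken from the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """Hypothetical record for one versioned artifact."""
    kind: str     # e.g. "requirement", "model", "test", "test_result", "code"
    name: str
    version: str  # revision of the artifact in version control


# (source kind, target kind) pairs from the list above.
REQUIRED_LINKS = {
    ("requirement", "model"),
    ("requirement", "test"),
    ("test_result", "model"),
    ("test_result", "requirement"),
    ("model", "code"),
    ("integration_test", "requirement"),
}


def missing_links(artifacts, links):
    """Return (artifact, target_kind) pairs for every required link that is absent.

    `links` is a list of (source_artifact, target_artifact) tuples.
    """
    missing = []
    for art in artifacts:
        for src_kind, dst_kind in sorted(REQUIRED_LINKS):
            if art.kind != src_kind:
                continue
            linked = any(src == art and dst.kind == dst_kind for src, dst in links)
            if not linked:
                missing.append((art, dst_kind))
    return missing


# Example: a requirement linked to the model but not yet to any test.
requirement = Artifact("requirement", "REQ-1", "r12")
controller = Artifact("model", "controller", "r40")
for art, kind in missing_links([requirement, controller], [(requirement, controller)]):
    print(f"{art.kind} {art.name}@{art.version} has no link to a {kind}")
```

Because each artifact carries its version, a link made against an old revision does not count for a new one. That is the reason the hooks into version control have to exist from the start of the project.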

Now that you have your “outline”, what now? (4)

Tracing the steps is just the “first” step; next, you need to validate the behavior of every tool (including your tracing tools) along the way. There are 5 basic steps for validating a software tool:

- Create a validation plan: Define what it is you will be testing, under what conditions, what is the environment, who will perform the validation…

- Define system requirements: The creation of a system requirements (SRS) document breaks down into two parts; infrastructural and functional. During this time a system risk analysis document is created along with mitigation strategies.

- Create a validation protocol and test specs: Definition of both the test plan (how you will test) and the specific test cases. A traceability (5) matrix between the validation plan and the test plan is created.

- Perform the testing: Execution of the tests defined in step 3.

- Review and update: Collect the results of step 4; for any issues that failed the validation plan, determine if they fall under the mitigation strategies or if the plan / tool needs to be updated.
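The “review and update” step can be pictured as a simple triage of the test results. The Python sketch below is only an illustration under assumed names: `ValidationResult`, `review` and the risk identifiers are hypothetical, and a real validation record would carry far more detail.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ValidationResult:
    """Hypothetical outcome of one test case from step 4."""
    test_id: str
    passed: bool
    risk_id: Optional[str] = None  # risk from the risk analysis this test relates to, if any


def review(results, mitigated_risks):
    """Sort results into passed, covered by a mitigation strategy, or needing an update."""
    report = {"passed": [], "mitigated": [], "needs_update": []}
    for result in results:
        if result.passed:
            report["passed"].append(result.test_id)
        elif result.risk_id in mitigated_risks:
            # The failure maps to a risk that already has a mitigation strategy.
            report["mitigated"].append(result.test_id)
        else:
            # No mitigation applies: the plan or the tool has to change.
            report["needs_update"].append(result.test_id)
    return report


results = [
    ValidationResult("T1", passed=True),
    ValidationResult("T2", passed=False, risk_id="RISK-7"),
    ValidationResult("T3", passed=False),
]
print(review(results, mitigated_risks={"RISK-7"}))
```

Anything that lands in the last bucket sends you back to the earlier steps, which is why the risk analysis and mitigation strategies are written before the tests are run.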

Getting your ducks in a row

Assuming you get all your ducks in a row (6), what next? The next step is to roll out how you will use those tools to your end users. Part of the software validation process is specifying how the tool is used; this can take the form of modeling guidelines (MAAB Style guide), defined test frameworks or other workflow tools.

The only time is NOW

As these tasks start mounting up you can see why “better late than never” will not work for a safety critical workflow; by the time “late” comes along, you have already been developing algorithms without the guidelines, creating artifacts without traceability and using tools that may, or may not, be certifiable.

There is, of course, good news. Processes learned in one project can be reused (as can verification artifacts), so much of what you face is a one-time upfront cost. If this is your company’s first time, you can also leverage industry best practices such as the IEC Certification Kit.

Footnotes

- People often talk about things being dangerous that are not, in fact, dangerous. However when it comes to safety standards, the failure to start early can result in critical mistakes entering into the project which can lead to injury and even death.

- Hooks is a term used to describe the infrastructure to connect different components together.

- A joke about “paper airplanes” would make sense about now.

- Since an “outline” is a “tracing”

- The longer you work in the safety critical world, the more often you will hear the term “safety critical”

- And for a software development project there will be multiple “ducks” to validate: test vector generators, code generators, compilers, test harness builders….